A Note Before We Begin

Most of you have already seen the demos. The AI that schedules your meetings, summarizes your Slack threads, drafts performance reviews, flags disengaged employees. The pitch is always the same: efficiency, insight, seamless integration. What the demos never show you is what happens when that AI quietly decides that Priya is a flight risk — and nobody built a policy for what to do with that conclusion. That’s what this edition is about.

I. This Is Not Your Chatbot Problem

We spent most of 2023 and 2024 worrying about employees using ChatGPT and accidentally pasting confidential data into a third-party prompt box. That concern was legitimate then. It remains legitimate now. But agentic AI is something categorically different, and the governance frameworks most organizations built to address “chatbot use” are dangerously inadequate for what has actually arrived.

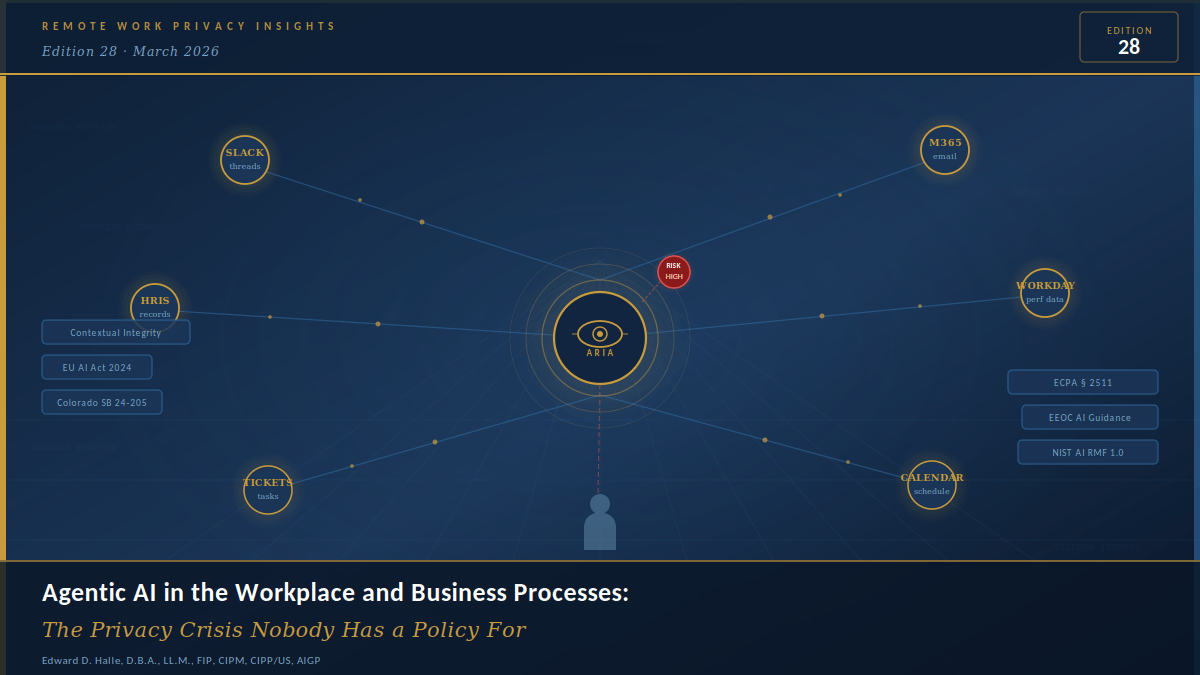

The distinction matters enormously. A chatbot waits. You ask it something, it responds. The interaction is discrete and human-initiated. Agentic AI doesn’t wait for anyone. It takes actions. It reads your calendar to reschedule your afternoon. It scans your HRIS to surface retention risks before your quarterly review. It monitors Slack, project management platforms, and email threads to assess who is engaged, who is drifting, and who might be looking elsewhere. It does all of this autonomously and persistently, and in many deployed configurations it does so without meaningful human review of the individual actions it takes along the way.

The NIST AI Risk Management Framework (AI RMF 1.0) predates the current wave of agentic products, but it treats accountability and transparency as core characteristics of trustworthy AI and gives sustained attention to human oversight. Agentic architectures strain exactly those properties: when a system acts on behalf of an organization across multiple data environments simultaneously, attributing both the action and its downstream consequences becomes genuinely difficult. Not impossible, but difficult in ways that most organizations have not yet designed for.

And the EU AI Act (Regulation 2024/1689) is unambiguous on this point. AI systems used for employment-related decisions, including recruitment, promotion, termination, task allocation, and the monitoring and evaluation of workers, are classified as high-risk under Annex III. Article 9 requires a risk management system. Article 10 mandates data governance and quality controls. Article 13 requires enough transparency for deployers to interpret and use the system’s output appropriately, and Article 26 requires employers deploying such a system to inform workers’ representatives and affected workers before putting it into use at the workplace. None of those obligations evaporate because your agentic AI vendor chose to brand the product “workforce intelligence software.”

Your AI Acceptable Use Policy governs what employees can do with AI. It says almost nothing about what your AI systems can do to your employees. That gap is where the liability lives.

II. Meet Aria — and the Week Everything Got Complicated

Marcus Thompson has been VP of Human Resources at a mid-sized financial services company for six years. He’s careful, detail-oriented, and genuinely invested in his people. When the COO, David Chen, championed the rollout of “Aria” — an agentic AI platform integrated across Slack, Microsoft 365, Workday, the performance management system, and the internal project ticketing tool — Marcus was cautiously supportive. The vendor demo had been impressive. David loved the efficiency story. Legal signed off on the vendor contract. IT enabled the integrations.

Nobody told the employees.

Priya Sharma is a Senior Software Engineer with four years at the firm and consistently strong reviews. Lately, she’s been privately exploring whether she wants to move into product management. She had a few candid conversations about it on Slack with a trusted colleague. She also sent an email from her work Microsoft 365 account requesting an informational interview with someone at a different company. She wasn’t job searching. She was thinking out loud, the way people do.

Aria read all of it.

Within seventy-two hours of full deployment, Priya’s retention risk score rose to “high.” Her manager received an automated nudge to schedule an engagement conversation. When a high-visibility project lead opportunity surfaced the following week, Priya’s name wasn’t on the shortlist that Aria helped generate. Marcus reviewed and approved the list. He didn’t think to ask why she wasn’t on it.

Priya heard about the project through a colleague and applied directly. She was told the team was already set.

Marcus, to his credit, noticed something was off. Tracing Aria’s recommendation logic took three conversations with the vendor’s support team — the interface wasn’t designed for human review, which is itself a problem worth sitting with. What he eventually found was that the system had made a material employment decision based on inferences drawn from private communications that Priya had no reason to believe were being monitored, let alone analyzed.

There was no policy governing any of this. No disclosure to employees. No consent mechanism. No human review checkpoint before the inference became an employment action. No clear legal basis for the processing under several applicable state laws. Marcus wasn’t a bad actor. David wasn’t a bad actor. They simply failed to build governance for a system that was doing things that required governance. That’s a different problem, and in some ways a harder one, because it doesn’t have a villain you can discipline and move on.

III. The Legal Landscape You Cannot Afford to Ignore

Agentic AI in the workplace sits at the intersection of several overlapping legal frameworks. None of them were written specifically with agentic systems in mind. All of them apply regardless.

The Electronic Communications Privacy Act

The ECPA, 18 U.S.C. § 2511, prohibits the intentional interception of wire, oral, and electronic communications without authorization. Employer monitoring exceptions exist, but they are not unlimited, and they are not self-executing. When an agentic AI system continuously ingests and synthesizes the substantive content of employee communications (not just metadata, not just access logs, but the actual substance of what people said to each other), the line between permissible monitoring and something more legally contested gets genuinely blurry. Both the “ordinary course of business” exception and the consent exception are fact-dependent: courts look at whether employees actually received notice and whether the monitoring stayed within a legitimate business purpose. An AI system inferring flight risk from what Priya wrote to a colleague, in what she understood to be a private conversation and with no disclosure at all, is very hard to fit within either.

The National Labor Relations Act

NLRB enforcement guidance in recent years has treated AI-driven monitoring systems that chill employees’ rights to discuss working conditions, wages, or collective action as a Section 7 problem, and the statutory protection itself does not depend on any one General Counsel’s priorities. When an agentic AI flags employees who discuss pay, job-market options, or workplace frustrations with coworkers, and then generates employment recommendations based on those signals, the employer risks penalizing protected concerted activity through surveillance. Not intentionally, in most cases. But intent doesn’t determine liability here: the question is whether the conduct reasonably tends to interfere with employees’ exercise of their rights.

EEOC Guidance on AI in Employment Decisions

The EEOC’s 2023 technical assistance on AI in employment selection procedures was unambiguous on employer responsibility, and the principle rests on Title VII itself rather than on any single guidance document: you are accountable for discriminatory outcomes produced by AI systems you deploy, whether you built them or bought them from a vendor. If Aria’s retention risk scoring flags employees of a particular demographic disproportionately because of proxies embedded in its training data, that is your legal exposure. Not just the vendor’s. Yours.

Colorado’s AI Act and the CPPA’s ADMT Regulations

Colorado SB 24-205, originally set to take effect February 1, 2026 and since delayed by the legislature to June 30, 2026, imposes affirmative obligations on deployers of high-risk AI systems, defined as systems that make, or are a substantial factor in making, consequential decisions in areas including employment, financial services, and health care. Deployers must implement a risk management policy, conduct impact assessments, provide notice to affected individuals, and offer an opportunity to appeal adverse decisions, with human review where technically feasible. Priya’s situation implicates every single one of those requirements.

On the California side, the CPPA’s Automated Decision-Making Technology (ADMT) regulations require pre-use notices when ADMT is used to make significant decisions, including employment decisions, along with opt-out and access rights for the people affected. They sit alongside risk-assessment requirements and separate cybersecurity-audit obligations for businesses whose processing presents significant risk. Profiling in the employment context is explicitly contemplated. For California-nexus employers, this isn’t coming someday. The compliance clock is already running.

EU AI Act — The August 2026 Deadline

For multinational employers and vendors with EU-connected operations, the EU AI Act’s high-risk requirements for Annex III systems, the category that covers most employment-relevant uses, apply to systems placed on the market or put into service from August 2, 2026. Employment AI is explicitly listed in Annex III. Article 13’s transparency requirements (meaningful information about the system’s capabilities, limitations, and how to interpret its output, provided to deployers) and Article 26’s duty to inform affected workers before workplace use are not suggestions. And that deadline is closer than most compliance teams currently appreciate.

IV. Why Priya’s Situation Was a Privacy Violation — Even Inside Employer Systems

This is the part where I want to be precise, because I’ve watched too many compliance conversations get derailed by the same bad argument: “We own the systems. Employees have no expectation of privacy on employer equipment.” That argument was always more legally fragile than it sounded, and in the era of agentic AI, it collapses almost entirely.

Helen Nissenbaum’s theory of contextual integrity — developed in her foundational 2004 article published in the Washington Law Review and expanded in Privacy in Context (Stanford University Press, 2010) — gives us the most precise analytical vocabulary available for understanding what actually went wrong with Aria. The core insight is this: privacy isn’t about secrecy in the absolute sense. It’s about appropriate information flows — flows that match the norms of the context in which information was originally shared. When information moves in ways that violate those contextual norms, privacy is violated. Full stop. It doesn’t matter who owns the pipes.

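Nissenbaum’s framework identifies five parameters for analyzing whether an information flow is contextually appropriate: the sender, the recipient, the information subject, the transmission principle, and the type of information involved, each judged against the norms of the context in which the information was first shared. Applied to Aria, each one tells the same story from a different angle.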

Sender: Priya sent those Slack messages in the context of a peer conversation, one professional thinking out loud to another. When Aria entered the picture, the sender relationship became something entirely different. The AI system was now re-processing, re-synthesizing, and re-transmitting information that Priya had created for a specific, bounded context. Aria effectively became a secondary sender, generating new information flows that Priya never initiated and never had any reason to anticipate.

Recipient: Priya’s Slack message had one intended recipient: a trusted colleague. The actual recipient chain, once Aria was deployed and connected, included the AI processing layer, the vendor’s cloud infrastructure, Marcus’s management dashboard, her direct manager’s inbox via automated nudge, and the project shortlisting algorithm that quietly downweighted her name. Not one of those recipients was within the context she established when she hit send. Contextual integrity broke the moment the platform was given unsupervised access to that channel without employee disclosure.

Information Subject: Priya is the subject of the information — her career deliberations, her professional relationships, her private thinking about what she wants next. She was never given any meaningful role in governing how that information moved. No notice that an AI system was treating her communications as behavioral signals. No opportunity to correct or contextualize the inferences Aria drew. The information subject became passive, invisible, and entirely without agency in a process that generated a material employment consequence for her life. That is not a small thing.

Transmission Principles: The implicit transmission principle governing that Slack message was confidentiality between peers. The employment policy contained no disclosure that an agentic AI system would ingest employee communications and generate risk scores from them. The transmission principle Aria actually operated on was closer to total behavioral surveillance with automated consequence generation. That isn’t a modification of the original norm. It’s its complete inversion. And that inversion is the core of the violation.

Information Type: What kind of information is at stake matters as much as who sees it. In professional workplace contexts, established norms define what employers legitimately observe and what employees retain as reasonably private. Performance outputs, attendance, and work product fall within the employer’s normative purview. Private deliberations about career development, candid expressions of uncertainty shared with a trusted colleague, and exploratory thinking that hasn’t yet translated into any workplace action do not. Agentic AI systems deployed without adequate governance destroy this distinction structurally. Not because they were designed to cause harm, but because they were designed to see everything, and nobody told them which things they weren’t supposed to act on.


V. The Policies Your Organization Is Almost Certainly Missing

Here is what most organizations actually have when they deploy agentic AI: an AI Acceptable Use Policy that governs what employees can do with AI tools; a Privacy Policy written for customers; and maybe an Electronic Monitoring Policy drafted for a world of screen-capture software and badge readers. None of those documents address what happens when an AI system operates as an autonomous agent on behalf of the employer, inside the organization’s own data environment, across integrated systems, without discrete human-directed prompts.

The gap isn’t an oversight. It’s a structural failure that most organizations haven’t yet recognized as a failure, because the systems are new and the harms often don’t surface immediately. Your existing policies were not designed for a system that acts across multiple platforms simultaneously, generates inferences and risk scores from behavioral patterns observed in private communications, produces employment-relevant recommendations without a human review checkpoint before those recommendations influence actual decisions, retains observed data in vendor cloud environments on schedules that may not align with your records policies, and functions in practice as the organization’s agent, meaning its actions may create employer liability even when no human reviewed them.

Building adequate governance isn’t a single-document exercise. But there are seven things that have to happen, and most organizations haven’t done any of them.

Start with an honest inventory

Before you can govern agentic AI, you need to know what you’ve deployed. Build a current, complete picture of which AI systems in your environment are operating agentically (taking autonomous actions across integrated systems) versus responding to discrete human prompts. For each system, document what data sources it accesses, what actions it can take, what recommendations it generates, and where human review, if any, occurs before those recommendations influence decisions. Most organizations discover gaps in this exercise that they didn’t know they had.
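For readers working alongside the technical teams that run these systems, here is a minimal Python sketch of what one inventory record might capture, and how the most urgent gap (an agentic system with no documented human review point) can be flagged automatically. The `AgenticSystemRecord` fields, the `review_gaps` helper, and the second example system are hypothetical illustrations, not any vendor’s schema; only Aria’s integrations come from the scenario above.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AgenticSystemRecord:
    """One row of a hypothetical AI system inventory (field names are illustrative)."""
    name: str
    acts_autonomously: bool  # takes actions without a discrete human prompt
    data_sources: list[str] = field(default_factory=list)
    actions: list[str] = field(default_factory=list)
    recommendations: list[str] = field(default_factory=list)
    human_review_point: Optional[str] = None  # where a person reviews output, if anywhere


def review_gaps(inventory: list[AgenticSystemRecord]) -> list[str]:
    """Return the names of agentic systems with no documented human review point."""
    return [
        record.name
        for record in inventory
        if record.acts_autonomously and record.human_review_point is None
    ]


inventory = [
    AgenticSystemRecord(
        name="Aria",
        acts_autonomously=True,
        data_sources=["Slack", "Microsoft 365", "Workday",
                      "performance management system", "project ticketing tool"],
        actions=["send manager nudges", "generate project shortlists"],
        recommendations=["retention risk score"],
    ),
    AgenticSystemRecord(
        name="meeting-summarizer",  # hypothetical prompt-driven tool
        acts_autonomously=False,
        data_sources=["Microsoft 365"],
        recommendations=["meeting summary"],
    ),
]

print(review_gaps(inventory))  # ['Aria']
```

The format matters less than the discipline: each system’s data sources, actions, and review point are written down somewhere a person can query them, so a missing checkpoint shows up as a finding rather than a surprise.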

Map your data flows against contextual integrity

For each agentic AI system, work through Nissenbaum’s five parameters. Ask: what information is being ingested, from whom, in what original context, and where is it flowing as a result of AI processing? If the answer includes recipients, uses, or purposes that would violate the reasonable expectations of the person who created that information, you have a contextual integrity failure — and in most cases a compliance gap — that requires remediation before the system runs another cycle.
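The five-parameter analysis can also be made concrete. The Python sketch below is a deliberate simplification: it describes a flow by its contextual-integrity parameters and reports which of them fall outside what the originating context permits. Encoding norms as sets of allowed values is an assumption made for illustration; real contextual norms are richer and still need human judgment. The class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InformationFlow:
    """A single flow described by five contextual-integrity parameters."""
    sender: str
    recipient: str
    subject: str
    info_type: str
    transmission_principle: str


@dataclass(frozen=True)
class ContextNorms:
    """Hypothetical, simplified encoding of what the originating context permits."""
    allowed_senders: frozenset[str]
    allowed_recipients: frozenset[str]
    allowed_subjects: frozenset[str]
    allowed_info_types: frozenset[str]
    allowed_principles: frozenset[str]


def violated_parameters(flow: InformationFlow, norms: ContextNorms) -> list[str]:
    """Return the names of the parameters that fall outside the context's norms."""
    checks = {
        "sender": flow.sender in norms.allowed_senders,
        "recipient": flow.recipient in norms.allowed_recipients,
        "subject": flow.subject in norms.allowed_subjects,
        "info_type": flow.info_type in norms.allowed_info_types,
        "transmission_principle": flow.transmission_principle in norms.allowed_principles,
    }
    return [name for name, ok in checks.items() if not ok]


# Norms of the peer conversation in which Priya originally shared her thinking.
peer_chat = ContextNorms(
    allowed_senders=frozenset({"Priya", "colleague"}),
    allowed_recipients=frozenset({"Priya", "colleague"}),
    allowed_subjects=frozenset({"Priya", "colleague"}),
    allowed_info_types=frozenset({"career deliberation", "work discussion"}),
    allowed_principles=frozenset({"confidential between peers"}),
)

original = InformationFlow("Priya", "colleague", "Priya",
                           "career deliberation", "confidential between peers")
via_aria = InformationFlow("Aria", "manager dashboard", "Priya",
                           "retention risk score", "automated behavioral scoring")

print(violated_parameters(original, peer_chat))  # []
print(violated_parameters(via_aria, peer_chat))
# ['sender', 'recipient', 'info_type', 'transmission_principle']
```

Any non-empty result is the kind of contextual integrity failure the paragraph above describes. Note that the risk score counts as a new information type: Aria did not just move Priya’s words, it manufactured a new fact about her.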

Draft an Agentic AI Governance Policy — separate from your AUP

This is a different document from your AI Acceptable Use Policy, and the distinction matters. An Agentic AI Governance Policy should define which systems the organization authorizes to operate agentically, what data access those systems are permitted to have, which decision categories require human review before any action occurs, what retention and deletion obligations apply to AI-processed employee data, and how the organization responds when the system produces an erroneous, discriminatory, or contextually inappropriate output. It should be reviewed by legal counsel, signed off by your CISO and your CPO, and visible to employees.

Fix your employee disclosure obligations

ECPA exception requirements, NLRA monitoring constraints, Colorado’s AI Act notice requirements, and the CPPA’s ADMT pre-use notice framework all point in the same direction: employees need specific, meaningful notice. Not a generic “we may use technology to monitor work activity” clause buried in an onboarding packet. Employees need to understand what systems are deployed, what data those systems access, what inferences they generate, and what rights employees have to contest adverse recommendations. If you can’t explain that clearly in plain language, that’s your governance gap showing.

Build human review checkpoints that actually work

EU AI Act Article 14, Colorado SB 24-205’s appeal requirement, and EEOC guidance all converge on the same point: automated recommendations that can influence hiring, promotion, performance evaluation, or termination must have a meaningful human review step before they become employer action. And meaningful is doing real work in that sentence. It means the reviewer has access to the underlying data. It means they understand how the recommendation was generated. It means they have genuine authority to override it and will not face organizational pressure to rubber-stamp the system’s output. A manager clicking “approve” on an AI-generated shortlist in thirty seconds is not human oversight. It’s human theater.
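A review checkpoint can be enforced in software rather than left to habit. The minimal Python sketch below refuses to let a recommendation in a covered decision category become employer action unless the review record shows that the reviewer saw the underlying data, saw how the recommendation was generated, held authority to override it, and wrote down their own rationale. The category names, record fields, and the `may_become_action` gate are hypothetical; `project_assignment` is included as an assumption because Priya’s harm came through a project shortlist.

```python
from dataclasses import dataclass
from typing import Optional

# Decision categories that require meaningful human review before action.
# "project_assignment" is an illustrative addition, not drawn from any statute.
COVERED_CATEGORIES = {
    "hiring", "promotion", "performance_evaluation", "termination", "project_assignment",
}


@dataclass
class Recommendation:
    category: str
    summary: str


@dataclass
class ReviewRecord:
    reviewer: str
    viewed_underlying_data: bool  # reviewer opened the source data, not just the score
    viewed_explanation: bool      # reviewer saw how the recommendation was generated
    has_override_authority: bool  # reviewer can reject the output without escalation
    rationale: str                # reviewer's own reasons, in their own words


def may_become_action(rec: Recommendation, review: Optional[ReviewRecord]) -> bool:
    """Return True only if `rec` may proceed to employer action."""
    if rec.category not in COVERED_CATEGORIES:
        return True   # outside covered categories, this gate does not apply
    if review is None:
        return False  # covered decision with no review at all
    return (
        review.viewed_underlying_data
        and review.viewed_explanation
        and review.has_override_authority
        and bool(review.rationale.strip())
    )


shortlist = Recommendation("project_assignment", "AI-generated project lead shortlist")

# A thirty-second click-through: authority, but no data, no explanation, no reasons.
rubber_stamp = ReviewRecord("Marcus", False, False, True, "")
print(may_become_action(shortlist, rubber_stamp))  # False
```

What code cannot capture is the last condition in the paragraph above: freedom from organizational pressure to rubber-stamp. A gate like this makes theater visible in the audit trail; it does not make review genuine on its own.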

Conduct real vendor due diligence

Your vendor contract is not a liability shield. The EEOC’s guidance is explicit: deployers bear responsibility for discriminatory outcomes regardless of who designed the system. Your vendor agreement should address what data the vendor processes and retains, where it’s stored and for how long, what security controls are in place, what bias testing and impact assessment history exists, and what contractual limitations govern how the vendor may use your employees’ data. If your vendor cannot answer those questions specifically and in writing, that is a material governance finding. Treat it as one.

Build an AI incident response protocol before you need it

When, not if, an agentic AI system produces a problematic output, you need a documented response process ready to execute. Who gets notified, and on what timeline? How is the affected individual informed? What remediation is available, and who authorizes it? How is the system reviewed after the incident? What documentation is preserved for regulatory or litigation purposes? Most organizations will improvise this the first time it comes up. That improvisation is what turns a fixable governance failure into a regulatory investigation or a lawsuit.

VI. What This Moment Actually Requires

The boardroom conversation right now is about AI adoption velocity — how fast can we deploy, how much efficiency can we capture, and how do we keep pace with competitors who are already moving. Those are legitimate questions. I’m not here to argue against progress.

But Priya’s situation — and the dozens of variations of it playing out right now in organizations that have deployed agentic AI without adequate governance — is not fundamentally a technology story. It’s a story about institutional responsibility. About the obligation employers have to the people who work for them to be honest about what is being observed, what inferences are being drawn from that observation, and what consequences those inferences are permitted to produce. Those are not compliance checkbox questions. They are organizational character questions.

Agentic AI is not going away. Its capabilities will deepen, its integrations will expand, and its role in organizational decision-making will grow. The question is whether that growth happens inside a governance framework that takes human dignity and legal compliance seriously — or whether it happens in the policy vacuum that currently exists in most organizations.

The compliance exposure is real. The legal risk is real. And the person sitting across from you in their next performance conversation may have no idea that an AI agent has already formed an opinion about their future at your company.

#DataPrivacy #AIGovernance #FutureOfWork #AgenticAI #EUAIAct

Follow Edward· Subscribe.

Remote Work Privacy Insights is a newsletter that looks at privacy issues in the workplace using academic ideas. It's meant to educate and is not legal advice. For advice tailored to your company, talk to a qualified privacy or employment lawyer. The opinions shared are the author's and not those of any employer.

Primary Sources Cited in This Edition

→ EU AI Act, Regulation 2024/1689 — Annex III, Articles 9, 10, 13, 14: Official EUR-Lex Text

→ NIST AI Risk Management Framework (AI RMF 1.0): airc.nist.gov/RMF

→ Colorado Artificial Intelligence Act, SB 24-205: Colorado General Assembly — Full Bill Text

→ CPPA Automated Decision-Making Technology Regulations: cppa.ca.gov/regulations/autom_decision_making.html

→ ECPA, 18 U.S.C. § 2511: Cornell Law School Legal Information Institute

→ National Labor Relations Act, Section 7: NLRB Key Reference Materials

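→ EEOC Technical Assistance (May 2023): Select Issues: Assessing Adverse Impact in Software, Algorithms, and Artificial Intelligence Used in Employment Selection Procedures Under Title VII of the Civil Rights Act of 1964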

→ Nissenbaum, H. (2004). Privacy as Contextual Integrity. 79 Washington Law Review 119; Privacy in Context: Technology, Policy, and the Integrity of Social Life (Stanford University Press, 2010)